00 // TL;DR

The Transformation, In One Grid.

Each iteration fixed what the previous one couldn't see. The product that finally works inherited six lessons in order. The rest of this post is how each lesson got learned.

Don't fight what the model wants to do. Code.

Large language models are really good at writing code. They are worse at manipulating proprietary application state, opaque DOM trees, or visual canvases whose semantics they can't see. Every iteration in this post is, at its core, a story about either fighting that truth or accepting it.

The iteration that finally works (Iteration 6) accepted it. It asked the model to do what the model is already great at, which is writing a React component, and let the rest of the platform absorb the output. Everything before that was, in different ways, fighting it.

Between late 2023 and May 2026, my team at Webflow shipped, killed, or rebuilt our agentic visual development experience six times.

- The first version we called AIDA (AI Design Assistant), and it was dead on arrival.

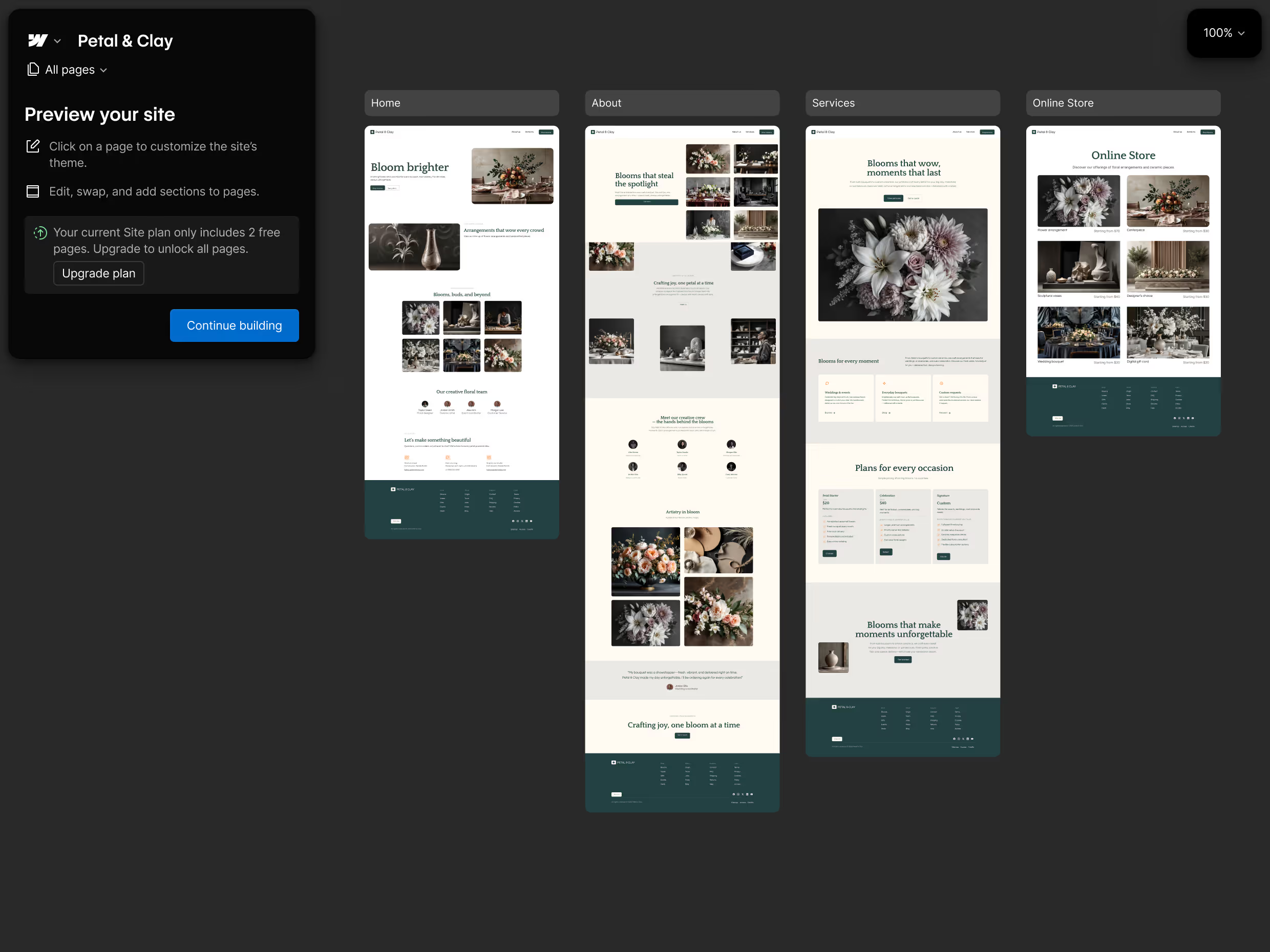

- The second version we called the AI Site Builder. A one-shot site-generation tool that shipped publicly in February 2025 and grew to 22% of all new Webflow sites within a year.

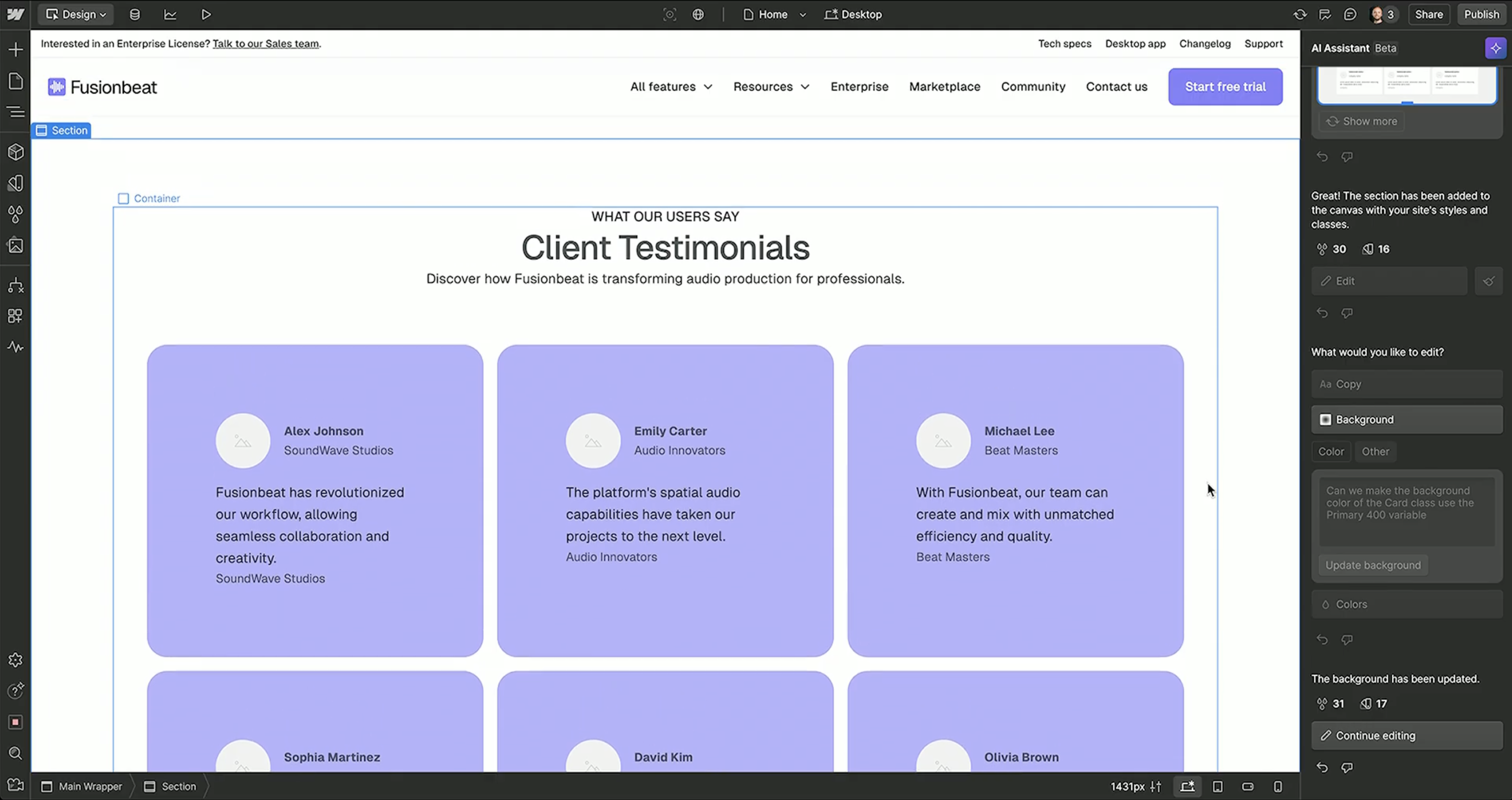

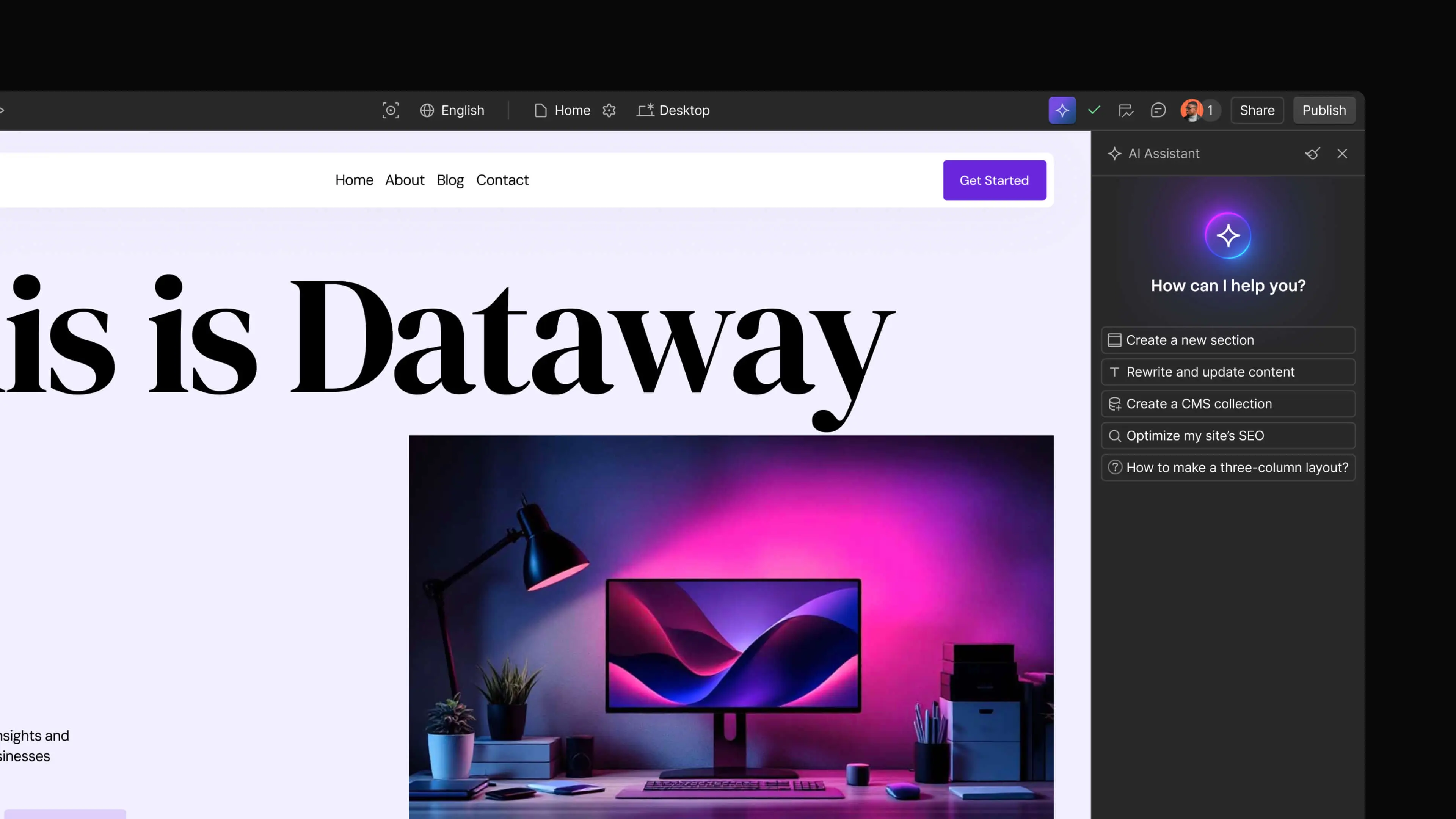

- The third version we called the AI Assistant, and we shipped it to public beta in October 2025. On its biggest stage, it struggled in front of fifty thousand people.

- The fourth version we called App Gen (project codename Orion), and we shipped it publicly in November 2025, then quietly paused development on it in April 2026.

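- The fifth version was AI Site Builder v2, which went GA on February 5, 2026 and deepened the one-shot instead of replacing it.

- The sixth version, AI Code Components, went GA on April 30, 2026, and we nailed it.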

- And in May 2026, those products started converging. The AI Assistant rolled out on every AI Site Builder–generated site, and the journey, for the first time, became continuous.

Six iterations. Six lessons. The lessons are the point of this post, because none of them are the obvious ones, and each of them changed how I now think about building AI products.

This is Part 1 of a three-part series called Becoming Agentic.

Part 1 covers the iterations and the lessons we learned building into the headwinds of the models. Part 2 covers the harness work that made it possible to ship agents that work in the Enterprise. Part 3 covers the closed-loop agents that compound on top of all of it.

Iteration 01 // 2023 → Late 2024

AIDA. The Wrong Surface.

The honest version of how AIDA started is this. In mid-2023, I'd been watching the same eye-popping AI demos everyone else was watching.

Generate a landing page for my startup in seconds with a single prompt … boop bee boop … here's a 🔥 landing page with some purple boxes.

ChatGPT had been out for less than a year. Every product leader I respected was saying the same thing in private. We have to figure out where AI fits, and we have to do it yesterday. I felt that pressure. My team felt it. Our customers felt it.

So we built AIDA. The pitch was clean. A chatbot that lived in the sidebar of Webflow's visual canvas, where you could type what you wanted and the agent would build it. The moat was clear. Nobody else had our canvas. So that's where our agent would live. We staffed it. We built it. We launched at Webflow Conf 2024.

And it was a GREAT demo that almost gave me a heart attack the night before when our model provider went down (but that's a story for another day!).

But a great demo != a great product, and it didn't ship as planned.

In November 2024, six weeks from the planned launch, the team walked our leadership group through what was actually working in AIDA versus what we'd told ourselves was working. It wasn't enough.

A few weeks later, in December 2024, one of the engineers on the team published a public retrospective on the Webflow blog. His verdict, in his own words: "It turned out we were extremely wrong about all of the assumptions above." He named four traps the team had walked into. Capability overreach, demo enthusiasm, the marketing moment, and moat-driven development. The post is worth reading on its own.

I want to focus on the fourth trap, moat-driven development. It's the one that produced this iteration's lesson, and it has a name from a book that predates this entire era.

Nearly thirty years ago, Clayton Christensen wrote The Innovator's Dilemma. His core argument, compressed: established companies fail not because they stop being good at what they do, but because they keep being good at it. They optimize for their existing customers, their existing performance metrics, and their existing moat. Disruptive innovations show up looking worse on traditional metrics, and the incumbents, rationally, decline to chase them. By the time the disruption is visible on the metrics the incumbents track, it's too late.

AIDA is what happens when you read Christensen and fall into the dilemma anyway, because the trap is built into how you think.

We'd designed AIDA around our moat (the visual canvas) instead of around an emerging customer behavior. The canvas was a genuine differentiator. But it was made for Webflow experts to manipulate.

Meanwhile, the customer behavior that AI was actually unlocking was happening at a different level entirely. The desire to apply natural language to any element on the page, at the moment of editing, without context-switching to a separate chat panel. Customers had been telling us, in our own research, what they actually wanted. One survey respondent put it like this: "ability to select any element and enter prompt anywhere from page to section to image to text." Another: "Deeper integration into workflow. Not just another UI thing to click on, something you can prompt and explore with so it actually adds new value to the workflow."

We had the visual canvas. They wanted to operate on the canvas, not adjacent to it. We had a chat panel because chat panels were the surface our applied AI team knew how to ship and our moat was easiest to wrap around. They wanted in-place edits because that's how they were learning to work with Cursor, with ChatGPT inside their browser, with Claude in their terminals. The behaviors AI was creating were not behaviors our existing moat addressed. We were building a sustaining innovation in a moment that demanded a disruptive one. We were stuck in our own trappings.

Your moat is the most dangerous place to start. The Innovator's Dilemma applies to AI.

Building the wrong thing inside your existing advantage feels like building on your strength.

The moat is real. The moat is not the product. The customer's emerging behavior is the product. If your moat amplifies that behavior, you have something compounding. If it just declares an existing advantage in an unfamiliar surface, you have decoration. And Christensen will, eventually, find you in his footnotes.

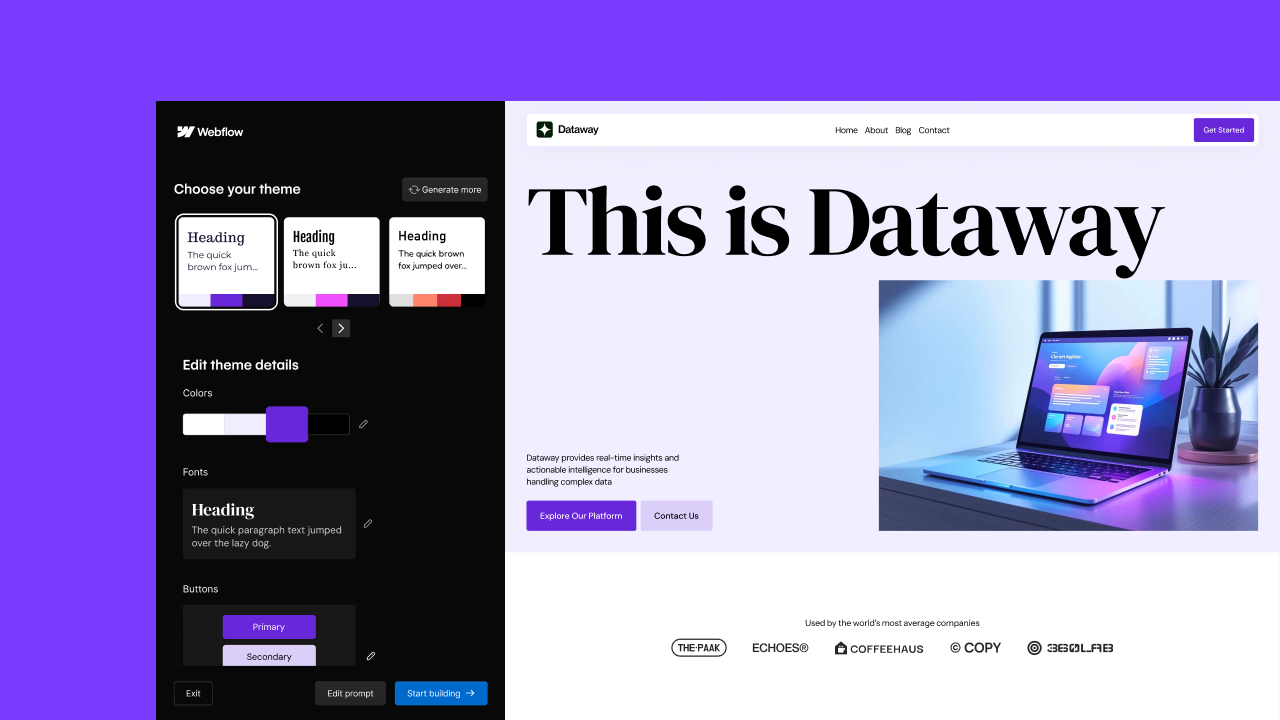

Iteration 02 // Feb 27, 2025 / Public Beta

AI Site Builder v1. Bounded Scope, But Only at the Starting Line.

In the immediate aftermath of pulling AIDA, six weeks from the December 2024 pivot decision, a different team at Webflow shipped a different kind of AI product.

We called it the AI Site Builder (AISB … we love our acronyms internally). The pitch was the opposite of AIDA's. Instead of an open-ended chatbot that could touch anything in the Designer, AISB did one thing.

You arrived at webflow.com/ai-site-builder, typed a description of the site you wanted, and got a site with copy, images, and animations, generated in seconds.

It worked. Genuinely, immediately, dramatically.

AIDA's failure was about an unbounded agent in the wrong surface. AISB's success was a bounded agent doing one well-scoped thing. Generate a site, once, from a prompt. We'd narrowed the agent's job to something the model could reliably do, on a surface that was itself bounded (a single startup flow at webflow.com/ai-site-builder, not the open canvas). And the customer behavior was real. People did want to type a prompt and get a site.

The Innovator's Dilemma lesson from AIDA still held. But the scope-narrowing was the corrective.

What AISB couldn't do was follow the customer past generation. What we learned was that AISB was a site starter, not a platform. The agent did its job, then went home. Everything past site generation was back to manual work in the Designer. Editing the structure, evolving the design, adding new sections, refining components. AISB had narrowed the agent's scope correctly, but the scope itself was the floor of the product, not the ceiling. The customer wanted the agent to come with them past the first prompt.

A single-shot agent is a tool, not a platform.

Bounded scope works. But the agent has to come with the customer past the first prompt, or you've built a moment, not a system.

This is the lesson I think the most ambitious AI products of 2024 and 2025 underweighted. Demoware is bounded by definition. One prompt, one output, one moment of magic. The product is what the agent does for the customer on day 30, day 60, day 90, when the novelty is gone and the customer is doing real work. AISB was great on day 1. It was invisible on day 30.

That gap is what made everything that followed (the AI Assistant, App Gen, AI Code Components) strategically necessary.

AISB also did something else that, in hindsight, matters more than the launch numbers. It gave us reps with real customers who were frustrated, and it gave us fuel to keep investing in the agentic platform while we figured the rest out.

Iteration 03 // Aug 2025 → End of 2025

The First AI Assistant. Capability Without Trust.

If AIDA was the iteration we got wrong because we built in the wrong place, the next version was the one we got wrong because we built in the right place, and forgot that capable is not the same as trustable.

The team for the AI Assistant (AIA) didn't start from scratch in mid-2025. They started from what we'd already learned by shipping the Webflow MCP Server. The MCP work was the parallel story of 2024 that I'll get deeper into in Part 2 of this series. The short version, for now, is that MCP taught us three things that shaped what the AIA team built.

None of these would have been obvious if we'd tried to design AIA before shipping MCP.

First, the platform should be addressable as tools, not screens. Building MCP forced us to think of Webflow not as a set of UI surfaces but as a set of capabilities an agent could call. Each capability discrete, well-described, and idempotent. That thinking became AIA's tool registry: an in-Designer chatbot interface where any team at Webflow could register their tools as first-class agent capabilities. The first set were small (link agent, designer agent, Designer Extension API) and grew as other teams hooked in.

Second, external agents revealed customer demand before we built for it. Once MCP was live, Cursor and Claude users started building real things with our APIs. Landing pages, content workflows, bulk CMS operations, schema generation, asset management. Watching what they built told us exactly where customers wanted agentic capability, and where the gaps were. AIA inherited that demand signal. We were no longer guessing about the use cases. MCP usage data had given us the map.

Third, tool surface design matters more than tool count. Extending MCP to the Designer API in September meant consolidating dozens of raw Designer operations into a smaller, well-named, agent-callable surface. Too many tools blow the context window before the agent does anything useful. AIA inherited that constraint as a design principle. Keep the tool registry lean, even when teams want to register everything they own.
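To make the first and third points concrete, here is a minimal sketch of a tool registry that enforces a lean surface at registration time. It is an illustration under stated assumptions, not Webflow's actual code: `ToolRegistry`, `ToolDefinition`, the `set_link` tool, the 60-token budget, and the four-characters-per-token estimate are all hypothetical.

```typescript
// Hypothetical sketch: names and numbers are illustrative, not a real Webflow API.
interface ToolDefinition {
  name: string;        // discrete: one capability per tool
  description: string; // well-described: the text the agent reads to choose a tool
  owner: string;       // the team registering the capability
  handler: (input: Record<string, string>) => unknown; // expected to be idempotent
}

// Rough heuristic assumed for illustration: about 4 characters per token.
const estimateTokens = (text: string): number => Math.ceil(text.length / 4);

class ToolRegistry {
  private tools = new Map<string, ToolDefinition>();
  private maxSurfaceTokens: number;

  constructor(maxSurfaceTokens: number) {
    this.maxSurfaceTokens = maxSurfaceTokens;
  }

  // Estimated tokens the agent spends just reading the tool list.
  surfaceCost(): number {
    let total = 0;
    this.tools.forEach((t) => {
      total += estimateTokens(`${t.name} ${t.description}`);
    });
    return total;
  }

  // Reject duplicates and anything that would push the surface over budget.
  register(tool: ToolDefinition): void {
    if (this.tools.has(tool.name)) {
      throw new Error(`Tool already registered: ${tool.name}`);
    }
    const cost = this.surfaceCost() + estimateTokens(`${tool.name} ${tool.description}`);
    if (cost > this.maxSurfaceTokens) {
      throw new Error(`Registering ${tool.name} would exceed the tool-surface budget`);
    }
    this.tools.set(tool.name, tool);
  }

  call(name: string, input: Record<string, string>): unknown {
    const tool = this.tools.get(name);
    if (!tool) throw new Error(`Unknown tool: ${name}`);
    return tool.handler(input);
  }
}

// Usage: an idempotent tool backed by an in-memory map.
const links = new Map<string, string>();
const registry = new ToolRegistry(60);

registry.register({
  name: "set_link",
  description: "Point an element's link at a URL. Repeating the call leaves the same state.",
  owner: "links-team",
  handler: (input) => {
    const { elementId = "", href = "" } = input;
    links.set(elementId, href);
    return { elementId, href };
  },
});

registry.call("set_link", { elementId: "cta", href: "/pricing" });
registry.call("set_link", { elementId: "cta", href: "/pricing" }); // retry, same end state
```

The budget check lives in `register` on purpose. In this sketch, the moment a team tries to add one more verbose tool is the moment the cost to the agent's context becomes visible, rather than after the agent has already degraded.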

That inheritance is the part of this story I'm proudest of. AIA had a year of platform work behind it. The MCP team had taught us how to expose capability to agents, even if it hadn't yet taught us how to govern that capability for enterprise customers. (Governance is where AIA tripped, which is what this section is about.)

That architecture is still right. It's what every subsequent agent at Webflow stands on. By August 2025, the internal alpha of AIA was working well enough that we started discussing whether to put the public beta on the Webflow Conf 2025 keynote stage in September.

The week before Webflow Conf 2025, we GA'd the Designer API extension to MCP. The moment external developers could manipulate the Webflow canvas in real time through any agent. The reception was the strongest agentic signal we'd had to date. Within hours, third-party developers were shipping live demos. The community channel filled with examples.

"Yooo!!! Designer MCP!! I know what I'm making a video about today."

That was the kind of unprompted developer enthusiasm we'd been waiting two years for. The signal gave us conviction. If external developers could already manipulate the Designer canvas via MCP, and were excited about it, then surely a native in-Designer AI Assistant, sitting on the same APIs, was ready for a public moment. We put the AIA public beta on the Webflow Conf 2025 keynote calendar and started rehearsing.

The conviction was right about the technology. It was wrong about the stage-readiness of the product. The Designer MCP reception was telling us the capability surface worked. It was not telling us the product surface was ready to be the centerpiece of a fifty-thousand-person keynote. The in-Designer chatbot, the demo flow, the live choreography of an AI Assistant doing meaningful things in front of a giant audience. I read the signal too generously. That's the decision I'd undo, if I could pick one to undo, from the whole agentic story.

I won't relitigate the day of the keynote. The short version. I was on stage in front of about fifty thousand people, demoing the agent doing things it had done, correctly, eighty times in rehearsal. It didn't do the thing. Someone had changed the prompt overnight, after I'd seen the final version of the generation.

The room got polite-quiet. I made a joke I don't remember. I handed off, walked into the green room, and looked at my phone. The internet had already started.

I sat with what had happened for a long time. What I eventually understood is that the agent on stage was capable. It could, in principle, do the thing I was asking. It had the tools. It had the context. It had the architecture. What it didn't have was the trust framework around it. Every Enterprise prospect we were talking to that summer had been telling us they needed one.

The Webflow Conf demo crystallized the gap publicly. But the people who'd been doing customer research all summer already knew it was there.

The lesson took the form of an Enterprise designer in one of our co-development interviews saying it plainly. "I'm really nervous to start generating tons of crap and then be like, this is good. I wouldn't feel comfortable being like, this is production ready without someone merging everything together."

Another participant, from a partner agency managing hundreds of enterprise sites on Webflow. "I don't want a random marketing person to just open a random AI session and be like, here is my project, do the updates and then have the AI hallucinate and destroy the entire website."

Capability is not trust.

The agent's power has to be matched by a control plane, or your customers will not let it near their production work.

The technical core of AI Assistant was sound. What was missing wasn't more model capability. It was the trust framework that made the capability usable.

Roles, permissions, sandbox-to-production, brand rules, granular review.

We had been building the foundation underneath all of this. The MCP server, the eval pipeline, the re-platformed CMS. We had not yet built the customer-facing trust surface. And until we did, the agent was going to fail in two predictable ways. Spectacularly on stage, and quietly in every enterprise conversation.
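A control plane of that kind can be sketched as a single authorization gate that every agent action passes through before it runs. This is a minimal illustration, not the framework we built: the roles, the two environments, and every rule in `authorize` are assumptions made up for the example.

```typescript
// Hypothetical sketch of a trust gate. Roles, environments, and rules are invented.
type Role = "viewer" | "editor" | "admin";
type Environment = "sandbox" | "production";

interface AgentAction {
  description: string;
  target: Environment;
  requestedBy: Role;
  reviewed: boolean; // has a human approved this specific change?
}

type Decision = { allowed: true } | { allowed: false; reason: string };

function authorize(action: AgentAction): Decision {
  // Permissions: some roles never get to drive the agent.
  if (action.requestedBy === "viewer") {
    return { allowed: false, reason: "viewers cannot run agent edits" };
  }
  // Sandbox: editors and admins can let the agent experiment freely.
  if (action.target === "sandbox") {
    return { allowed: true };
  }
  // Production: nothing lands without granular review.
  if (!action.reviewed) {
    return { allowed: false, reason: "production changes need review" };
  }
  // Sandbox-to-production promotion is reserved for admins in this sketch.
  if (action.requestedBy !== "admin") {
    return { allowed: false, reason: "only admins can promote to production" };
  }
  return { allowed: true };
}

const draft = authorize({
  description: "Regenerate hero section",
  target: "sandbox",
  requestedBy: "editor",
  reviewed: false,
});

const unreviewedPush = authorize({
  description: "Publish regenerated hero section",
  target: "production",
  requestedBy: "admin",
  reviewed: false,
});

console.log(draft.allowed, unreviewedPush.allowed);
```

The agency quote above maps onto the second call. The agent can generate whatever it likes in a sandbox, but an unreviewed change cannot reach the live site no matter who asks.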

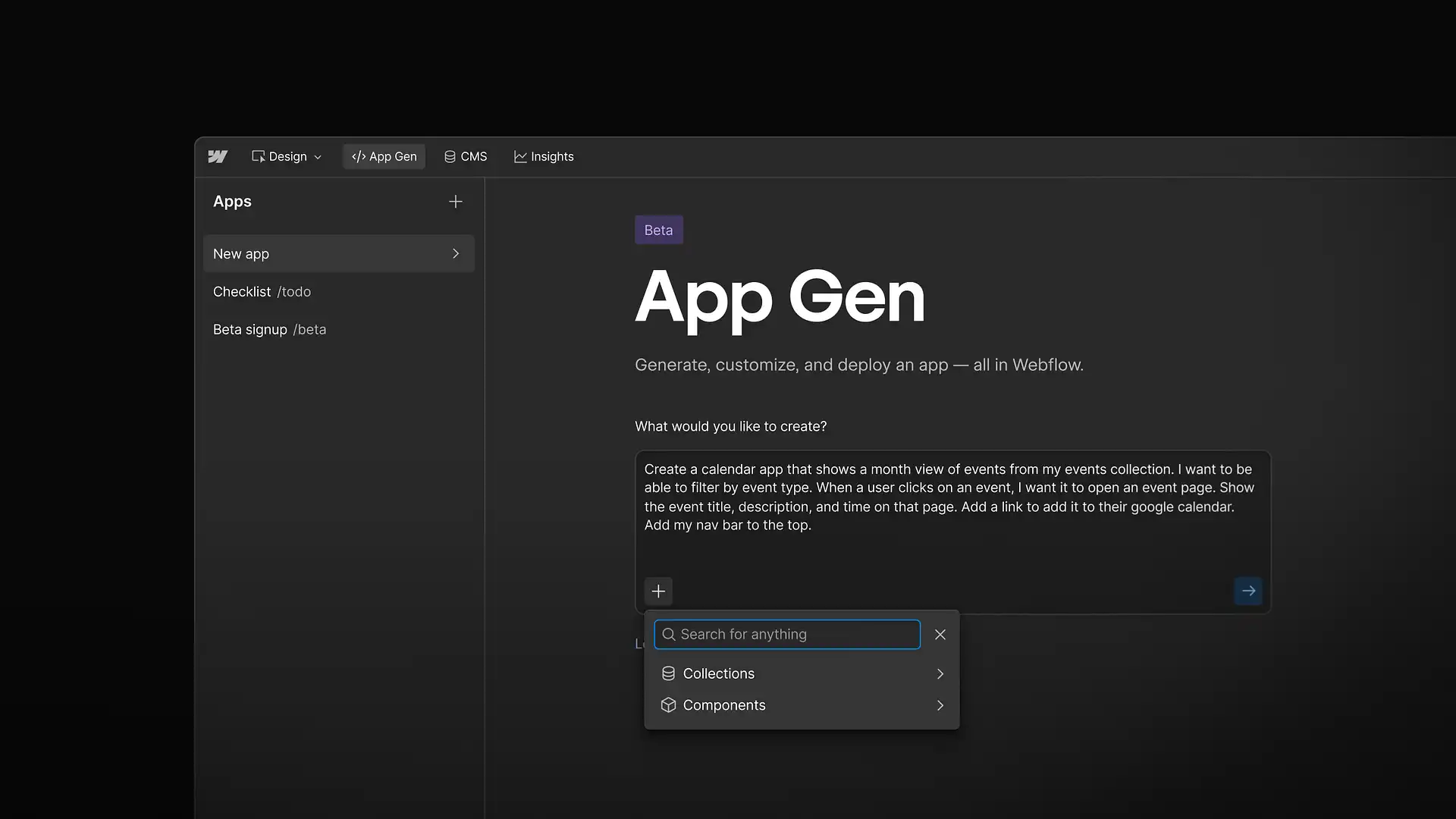

Iteration 04 // Nov 2025 → Paused April 2026

App Gen. The Agent Next Door.

While the AI Assistant team was working through the trust gap I described above, a parallel team was building something else entirely. We called it App Gen. Internally we called the project Orion.

The pitch was clean. "Prompt to production" … customers describe an app in natural language, and Webflow generates a full-stack web app that gets deployed to Webflow Cloud and is natively integrated with our CMS.

It was a real product. People built real things on it. One early adopter sent us a note after we decided to pause it calling App Gen "one of the greatest products ever made" and asking how much it would cost to keep using it after we paused development.

But by January 2026, three months after the public launch, the team had started to come to a different conclusion than the one we'd shipped on. We had missed the mark, again.

What did we learn?

Going through the post-launch survey responses for App Gen reads like one long, polite version of the same sentence. Make this work inside my actual site, not next to it. The most common feature request on the survey was "Ability to embed an app within an existing Webflow page," marked must-have by virtually every respondent.

One developer wrote. "The product currently defaults to generating an entirely separate webpage, which is unsuitable because the app often requires native functionality within the existing environment."

The Webflow blog post that announced App Gen's pause put the customer voice in one sentence. "You wanted this kind of functionality as components you could reuse across any page, not just one-off generations."

That's the lesson.

App Gen produced whole apps. Discrete, one-off, standalone, generated in a separate sandboxed environment with its own database, its own deploy flow, its own everything.

What customers actually wanted was building blocks. Reusable, embeddable, in-place. Things they could drop into the sites they were already building. They didn't want a parallel product next door to Webflow. They wanted Webflow itself to absorb the new capability.

Don't build the agent next door.

If your customer's work lives in your main product, the agent's output has to live there too. The right unit of agent output is the unit your customer was already composing with.

This is the lesson I think most product teams shipping AI right now are about to learn, the hard way. The temptation when you're building an AI feature is to spin up a separate environment, a separate flow, a separate everything, because the agent is new and your existing surfaces don't yet know how to host it. That separate environment is the trap. Every customer interaction with it generates a context-switch tax. Every output it produces lives outside the work the customer was actually doing. The agent becomes a side product the customer visits occasionally instead of a capability that compounds with everything else in your platform.

The fix isn't more model capability. It's relocating the agent's output into the primitives your customer was already building with.

Which is exactly what we did next.

Interlude // Dec 2025 → Jan 2026

Vibe Season.

While App Gen was wobbling internally, with the team gathering quality data and customers asking for things we hadn't built, our marketing team made a bet that turned out to matter as much as any product decision in this story.

From December 1, 2025 to January 1, 2026 (thirty-two days, holiday window, the slowest content month of the year) Webflow's marketing team launched a vibe-coded app every single day. Thirty-two apps in thirty-two days. Built by people across the company, mostly with App Gen, shared publicly across social channels with the simple framing. Here's what you can build with AI in Webflow today.

It was, by intent, a brand campaign. It turned out, accidentally, to be the most useful customer-research instrument we ran that quarter.

What the campaign did:

- It produced thirty-two specific, concrete examples of what was actually possible. Pricing calculators, event finders, quizzes, dashboards, location maps, job boards. Customers could see the surface area, not just imagine it.

- It kept the agentic story alive in public conversation during a launch-free window between Iteration 4 (App Gen, November) and Iteration 5 (AISB v2, February).

- It surfaced, by sheer volume, the patterns in what people actually wanted to build with AI in Webflow.

That last one was the part that turned out to matter.

The team running App Gen had been collecting customer feedback through the formal channels. Surveys, beta interviews, support tickets. The 32 apps gave us a parallel, less filtered signal. What does the internal Webflow community actually want to build with AI when you give them the camera and the megaphone for a month? And the answer wasn't standalone full-stack apps. It was interactive components for the sites we're already shipping. Calculators, calendars, filters, lookups. The kinds of things a marketer would want to drop into a landing page, reuse across multiple sites, and let a designer customize.

By the time the campaign closed on January 1, the conclusion was already visible. The customer ask wasn't App Gen. It was App Gen's output, but reusable, embeddable, native to the site. Three weeks later, the App Gen team formally redirected the roadmap "through the VCC lens." Three months after that, AI Code Components (AICC) went GA and App Gen was paused.

The 32 days of vibes campaign didn't kill App Gen. It told us, with thirty-two specific data points, what should replace it.

This is a pattern I now take seriously. Marketing campaigns are sometimes the highest-bandwidth customer research you'll do. When you give an internal team a daily content slot and a deadline, you don't get a polished brand story. You get an unfiltered view of what the people closest to the product actually want to make with it. The 32 apps were posted as marketing artifacts. They were read by the team as a product brief. Both readings were correct.

Iteration 05 // Feb 2026 / GA

AI Site Builder v2. Deepen Before You Replace.

By early 2026, AISB had been running in public beta for almost a year. The numbers were good. 22%+ of all new Webflow sites started there, and the strongest customer enthusiasm we'd seen on any agentic product. The AISB team had a choice to make for the GA release in February.

They could spin up a new product, ride v1's success into "AISB 2.0, now does everything" territory, and try to make AISB into a full agentic platform that picked up where the one-shot left off. Or they could keep the bounded scope and deepen it.

They chose the second path. AISB v2, GA'd on February 5, 2026, was not a bigger product. It was a bigger one-shot. The bounded scope (one prompt → one site) didn't change. What changed was what fit inside that scope.

Three things changed.

Multi-page generation. The single most-requested feature from v1 beta. Customers had been getting a homepage from AISB and then doing manual work to build the rest of the site. v2 generated multi-page sites in one go. Same bounded "one-shot" structure, more output per shot.

IX3 animation packs, baked in. One of the consistent v1 critiques (and one I've quoted earlier in this post) was that the output "looked generic." Sites generated for very different businesses ended up with similar layouts. v2 added animation diversity so outputs got visual identity without expanding the agent's scope.

A simplified flow. v1's multi-step generation process (prompt, then style choice, then section variance, then preview) was the actual conversion bottleneck. v2 reduced it to: prompt → site structure preview → full site preview. Fewer choices, less friction, more customers actually completing the generation.

The result was a lift in AISB conversions and a clean launch moment with no live-demo drama attached.

Deepen the bounded agent before you replace it.

Bounded scope compounds when you keep iterating inside the bounds. The temptation, when v1 works, is to graduate it into v2 by removing the bounds, to turn the bounded agent into a "platform." Resist that. Make the bounds bigger first.

This is the lesson I think product teams under-execute on. A bounded AI feature that works creates internal pressure to expand its scope into a "real" platform, and that expansion is usually where the second product fails. AISB v2 is the counter-example. Same product, same bounded one-shot, more value inside the bound. The team's discipline to not replace the bounded version bought us another year of compounding while we built the next layer of the agentic system separately, in AI Code Components (Iteration 6), which is up next.

The AI Assistant we'd been building alongside AISB v2, the one that struggled at WFC five months earlier, was the right next layer attempt. But it was a parallel track, not a replacement. AISB v2 kept being AISB v2. AIA was building toward what came after the one-shot ended. Both moved forward at once, and the moment in May 2026 when they finally connected (the subject of the Convergence section at the end of this post) was made possible by the team's discipline not to merge them prematurely.

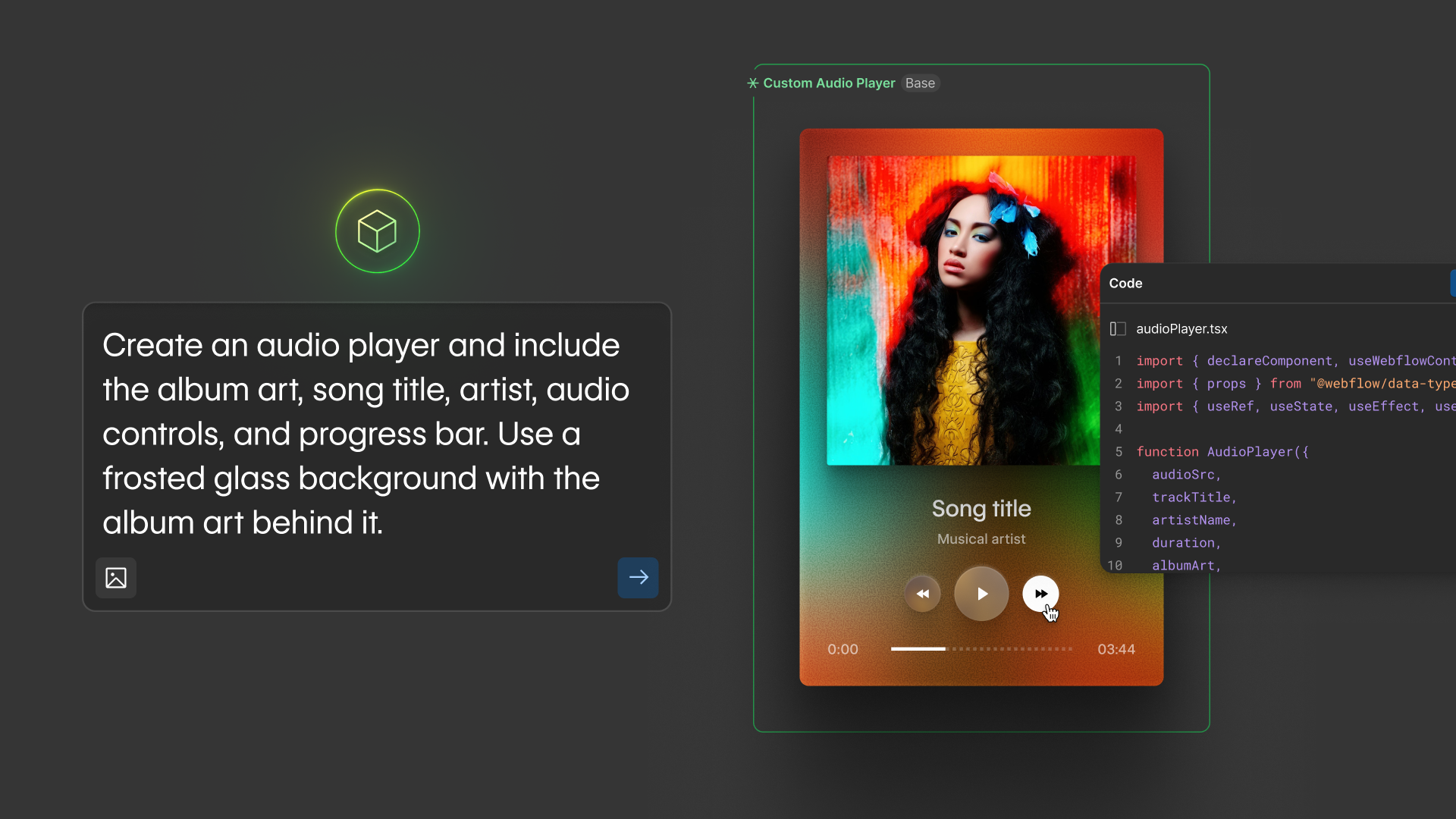

Iteration 06 // April 30, 2026

AI Code Components. Bound the Agent's Problem.

In late April, we GA'd a product called AI Code Components. It's the successor to App Gen and the surface that gives our AI Assistant something stable to stand on.

The pitch in one sentence. Customers describe an interactive component in natural language (a pricing calculator, a job board, an event calendar, a search filter) and the AI Assistant generates a production-ready React component that lives natively on the Webflow canvas, inherits the customer's design system, and can be reused across any page in their workspace.

Read that pitch carefully. It is the App Gen pitch with two changes. Component instead of app, and on the canvas instead of in a sandbox. Those two changes are the entire architectural difference. And they're the reason this version works where the previous one didn't.

To understand why, you have to understand the architecture, which is the part I keep coming back to. AICC is built on what our engineering team calls islands architecture.

The AI doesn't manipulate the whole Webflow design tree, which is a complex, proprietary structure where mistakes ripple in unpredictable ways. The AI generates React code inside an isolated component, which is a well-understood domain that large language models are unusually good at.

The technical lead on the project articulated it like this.

"Having each code component be an island, you feel safer than you do working in a tool like [Lovable, v0, etc.]. I'm only impacting this little widget that fits into the larger picture."

That phrase, safer island, became the value prop we'd been gravitating towards for two years and only landed in late 2025. It is the answer to the trust gap from Iteration 3. The agent isn't asking the customer to trust it with the whole site. It's asking the customer to trust it with one bounded, isolated, reversible primitive. Trust scales with surface area. Shrink the surface area, and trust gets cheap.

The other thing that makes AICC work, and the part the team almost didn't ship, is that the components AICC produces inherit the customer's world. The AI Assistant extracts brand colors, fonts, and spacing automatically. The components consume the customer's CMS collections. The components are reusable, composable, and shareable across every site in the workspace via exported libraries. The component isn't being generated in isolation. It's being generated into the customer's existing system.

The team that shipped this landed something I now think most of the rest of the industry hasn't figured out yet.

Bound the agent's problem.

Reliability scales inversely with surface area. Compose small reliable bets into a system. Demand reliability across the whole site, and you have a demo.
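A toy model makes the surface-area point concrete. Assume, purely for illustration, that a task is a chain of steps that each succeed independently with the same probability, and that the whole task fails if any step fails. Both the independence assumption and the 0.99 figure are mine, not measurements from any Webflow system.

```typescript
// Toy model, assumed for illustration: n steps, each succeeding independently
// with probability p, and the task succeeds only if every step does.
function chainSuccess(p: number, n: number): number {
  if (p < 0 || p > 1) throw new RangeError("p must be between 0 and 1");
  if (!Number.isInteger(n) || n < 0) throw new RangeError("n must be a non-negative integer");
  return Math.pow(p, n);
}

const perStep = 0.99; // hypothetical per-step reliability

const oneIsland = chainSuccess(perStep, 1);  // one bounded component
const wholeSite = chainSuccess(perStep, 50); // fifty coupled edits that must all land

console.log(oneIsland.toFixed(3), wholeSite.toFixed(3));
```

Under those assumptions, a step that works 99% of the time gives you a whole-site change that works only about six times in ten. The same per-step quality reads as a product at one scope and as a demo at the other.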

This is the lesson I think most product leaders building AI are still in front of, not behind. The temptation when you're shipping an AI feature is to give the agent the biggest possible playground. Let it generate anything, let it touch anything, let it solve any problem the customer brings.

That's the trap.

The agent that wins is the one operating inside a deliberately bounded primitive (a component, a recommendation, a draft, a single CMS item) where the customer can preview, accept, reject, undo.
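The preview, accept, reject, undo loop is small enough to sketch in full. What follows is a hypothetical illustration of a bounded primitive and is not how AICC is implemented: `IslandEditor` and its methods are invented names, and the "code" is just a string standing in for a generated component.

```typescript
// Hypothetical sketch: one island, one pending proposal, a history for undo.
class IslandEditor {
  private id: string;
  private code: string;
  private pending: string | null = null;
  private history: string[] = [];

  constructor(id: string, initialCode: string) {
    this.id = id;
    this.code = initialCode;
  }

  // Preview: the agent's output is staged, and nothing live changes yet.
  propose(generatedCode: string): string {
    this.pending = generatedCode;
    return generatedCode;
  }

  // Accept: the staged code replaces the live code, and the old version is kept.
  accept(): void {
    if (this.pending === null) throw new Error(`No pending proposal for ${this.id}`);
    this.history.push(this.code);
    this.code = this.pending;
    this.pending = null;
  }

  // Reject: drop the staged code and leave the live code untouched.
  reject(): void {
    this.pending = null;
  }

  // Undo: restore the most recently replaced version.
  undo(): void {
    const previous = this.history.pop();
    if (previous === undefined) throw new Error(`Nothing to undo for ${this.id}`);
    this.code = previous;
  }

  current(): string {
    return this.code;
  }
}

const editor = new IslandEditor("pricing-calculator", "v1");

editor.propose("v2");
const afterPreview = editor.current(); // still the original while previewing
editor.reject();

editor.propose("v3");
editor.accept();
const afterAccept = editor.current();

editor.undo();
const afterUndo = editor.current();

console.log(afterPreview, afterAccept, afterUndo);
```

Every operation touches exactly one island. The worst a bad generation can do in this sketch is sit in `pending` until someone rejects it.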

The whole-site agent comes later, and only as an orchestrator of bounded agents rather than as a single big surface.

The version of AI Assistant we're running in limited Enterprise beta as of this writing (six co-development customers across SaaS, healthcare, events, and financial services) is the synthesis of everything in this post.

It's the AI Assistant we tried to ship at WFC 2025, now finally working, because it generates AICCs (bounded), respects GRACE permissions (trusted), inherits the customer's brand context (in their world), and lives directly in the canvas the customer is already editing (the right surface). Four versions in, the same product finally compounds.

07 // May 2026

Convergence. The Journey Finally Closes.

The post you are reading is being written the week the journey finally closes.

In May 2026, in Phase 1 of our limited rollout, the new AI Assistant (with AI Code Components as its primary code-generation primitive) became available on every site created via AI Site Builder. Not just to six co-development Enterprise customers. To every customer whose site started with AI Site Builder, and soon to every customer.

That sounds like a routine GA milestone. It isn't. It's the first moment in three years of work where the agentic surfaces a customer encounters at Webflow are continuous.

A customer who starts a site today opens AISB and types a prompt. They get a multi-page, brand-aware, animated site in under two minutes. Then they open the Designer to edit the site, and the AI Assistant is waiting for them. Same agent identity, same brand context, same design system awareness. Iteration 6 picked up where Iteration 5 left off. When they need a pricing calculator, a job board, or an event filter, they ask the AI Assistant for it, and it generates an AI Code Component bounded inside its own island on the canvas. The same agent, the same world, three different moments of help.

That continuity is what becoming agentic actually looks like at the customer level. Not a launch. Not a single product. A series of bounded agents, each one well-scoped, each one trustable, each one inheriting the customer's world, composed into a continuous capability.

The platform that evolved underneath them is the subject of Part 2.

08 // The Reckoning

What the Six Lessons Add Up To.

Read across the six iterations, the lessons compound into a single thesis I'd put in front of any team building an AI product right now.

- Your moat is the most dangerous place to start. The Innovator's Dilemma applies to AI. (AIDA.)

- A one-shot agent is a tool, not a platform. The agent has to come with the customer past the first prompt. (AISB v1.)

- Capability is not trust. Power without a control surface is unsellable. (AI Assistant v1.)

- Don't build the agent next door. If the customer's work lives in your product, the agent's output has to live there too. (App Gen.)

- Deepen the bounded agent before you replace it. Bounded scope compounds when you keep iterating inside the bounds. (AISB v2.)

- Bound the agent's problem. Reliability scales inversely with surface area. Compose small reliable bets. (AICC.)

I want to be honest that I learned each of these lessons in the worst possible order. AIDA's lesson cost us a year. AISB v1's lesson was the one we got right immediately after AIDA, and the success of its bounded one-shot bought us the credibility to keep investing while we figured the rest out. The first AI Assistant's lesson cost us a public moment in front of fifty thousand people. App Gen's lesson cost us a year of engineering effort on a separate sandbox we'd eventually pause. AISB v2's lesson is the one I'm most quietly proud of. The team had every reason to graduate the bounded agent into a platform replacement, and chose discipline instead. AICC's lesson is the one we built on top of all five, and only because the previous five lessons had been beaten into us.

That trajectory matters. Most of the AI product post-mortems I read are written as if the company in question had the lessons in advance. They didn't. Neither did we. The lessons came from shipping, failing, and being honest about which part of the failure was structural versus which part was just bad luck. The structural parts are the ones in this post. The bad-luck parts I'll keep to myself.

That trajectory (unbounded sidebar → bounded one-shot → continuous but ungoverned → adjacent and standalone → deeper bounded one-shot → bounded composable primitive) is what becoming agentic actually looks like. It is not a single launch. It is six iterations of the same product, each fixing what the previous one couldn't see, with a seventh moment, May 2026, where they finally compose into one continuous experience.

The next part of the series is about what we were building underneath all six iterations. The unsexy substrate work in 2025 and early 2026 that nobody outside the company would have voted to fund and that made the sixth iteration possible. Without it, we'd still be on Iteration 1.

Building The Plumbing.

The infrastructure bets that turn an AI product into a platform, and the reason the AI Assistant we ship today works where the earlier iterations didn't.